
Let's now use PCA to see whether a smaller number of combinations of samples can capture the patterns. We start by finding the six PCs (PC1–PC6), which become our new axes (Fig.). We next transform the profiles so that they are expressed as linear combinations of PCs (each profile is now a set of coordinates on the PC axes) and calculate the variance (Fig.). As expected, PC1 has the largest variance, with 52.6% captured by PC1 and 47.0% captured by PC2. A useful interpretation of PCA is that r² of the regression is the percent variance (of all the data) explained by the PCs. As additional PCs are added to the prediction, the difference in r² corresponds to the variance explained by that PC. However, not all the PCs are typically used, because the majority of variance, and hence patterns in the data, will be limited to the first few PCs. In our example, we can ignore PC3–PC6, which together contribute little (0.4%) to explaining the variance, and express the data in two dimensions instead of six.
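The link between r² and variance explained can be checked numerically. The sketch below is only an illustration under stated assumptions: the data are randomly generated (nine hypothetical profiles over six samples, not the data from the example above), the PCs are obtained with NumPy's SVD, and r² is pooled over all six columns when the data are rebuilt from the first k PCs.

```python
import numpy as np

rng = np.random.default_rng(1)

# Hypothetical data: 9 profiles (rows) over 6 samples (columns), built from
# two underlying patterns plus a little noise. Not the data from the text.
patterns = rng.normal(size=(2, 6))
weights = rng.normal(size=(9, 2))
X = weights @ patterns + 0.05 * rng.normal(size=(9, 6))
Xc = X - X.mean(axis=0)                  # center each column

# Rows of Vt are the PC directions; s**2 is proportional to each PC's variance.
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
explained = s**2 / np.sum(s**2)          # fraction of total variance per PC
cumulative = np.cumsum(explained)

def r2_with_k_pcs(k):
    """Pooled r^2 when the centered data are rebuilt from the first k PCs."""
    approx = (Xc @ Vt[:k].T) @ Vt[:k]
    residual = np.sum((Xc - approx) ** 2)
    return 1.0 - residual / np.sum(Xc**2)

r2 = np.array([r2_with_k_pcs(k) for k in range(1, 7)])

for k in range(6):
    print(f"PC{k + 1}: explained {explained[k]:.4f}, r2 with first {k + 1} PCs {r2[k]:.4f}")
```

In this sketch the r² obtained with the first k PCs equals the cumulative variance fraction, and each increment in r² equals the variance fraction of the PC just added. Because the toy data were built from two patterns, the first two PCs carry nearly all of the variance.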

The second (and subsequent) PCs are selected similarly, with the additional requirement that they be uncorrelated with all previous PCs. For example, projection onto PC1 is uncorrelated with projection onto PC2, and we can think of the PCs as geometrically orthogonal. This requirement of no correlation means that the maximum number of PCs possible is either the number of samples or the number of features, whichever is smaller. The PC selection process has the effect of maximizing the correlation (r²) (ref. 2) between data and their projection and is equivalent to carrying out multiple linear regression (refs. 3, 4) on the projected data against each variable of the original data. For example, the projection onto PC2 has maximum r² when used in multiple regression with PC1.

Principal Component Analysis Method, Step 1: Get some data. Let us consider a simple arbitrary three-dimensional data set.

In summary, both factor analysis and principal component analysis have important roles to play in social science research, but their conceptual foundations are quite distinct.
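The two properties described above (uncorrelated projections and the cap on the number of PCs) can be illustrated with a small numerical sketch. It assumes randomly generated data with 4 samples and 6 features, and uses NumPy's SVD as one common way to obtain PCs; the variable names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical data: 4 samples (rows) measured on 6 features (columns).
X = rng.normal(size=(4, 6))
Xc = X - X.mean(axis=0)                  # center each feature

# Reduced SVD returns min(n_samples, n_features) components.
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)

scores = Xc @ Vt.T                       # projection of each sample onto each PC
cov_scores = np.cov(scores, rowvar=False)

# Off-diagonal covariances: projections onto different PCs are uncorrelated.
off_diag = cov_scores - np.diag(np.diag(cov_scores))

# Gram matrix of the PC directions: identity if they are orthonormal.
gram = Vt @ Vt.T

# Count PCs whose variance is not numerically zero.
n_pcs = int(np.sum(s > 1e-10 * s[0]))

print("components returned:", len(s))
print("components with non-zero variance:", n_pcs)
print("largest off-diagonal covariance:", np.abs(off_diag).max())
```

The number of components returned is the smaller of the two data dimensions. Because centering removes one degree of freedom, one of them has essentially zero variance when there are fewer samples than features, so the count of informative PCs can fall one below that cap.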

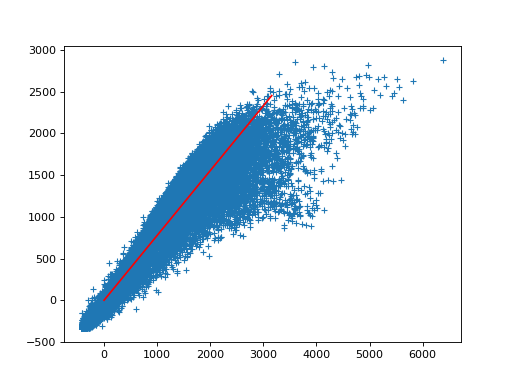

PCA reduces data by geometrically projecting them onto lower dimensions called principal components (PCs), with the goal of finding the best summary of the data using a limited number of PCs. The first PC is chosen to minimize the total distance between the data and their projection onto the PC (Fig.). By minimizing this distance, we also maximize the variance of the projected points, σ² (Fig.).
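A brute-force sketch can make the equivalence between minimizing distance and maximizing variance concrete. It assumes hypothetical two-dimensional data, measures distance as mean squared distance to the candidate axis, and scans candidate directions through the origin instead of solving for the PC directly, which is not how PCA is normally computed.

```python
import math
import random

random.seed(0)

# Hypothetical 2-D data scattered around a line, then centered on the origin.
xs = [random.gauss(0, 1) for _ in range(200)]
ys = [0.5 * x + random.gauss(0, 0.3) for x in xs]
mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
points = [(x - mx, y - my) for x, y in zip(xs, ys)]

def projection_stats(angle):
    """Return (variance of projections, mean squared distance to the axis)."""
    ux, uy = math.cos(angle), math.sin(angle)
    var = 0.0
    dist2 = 0.0
    for x, y in points:
        t = x * ux + y * uy                              # coordinate along the axis
        var += t * t
        dist2 += (x - t * ux) ** 2 + (y - t * uy) ** 2   # squared residual
    n = len(points)
    return var / n, dist2 / n

# Scan candidate directions over half a turn in 0.1 degree steps
# (an axis and its reverse describe the same line).
angles = [math.pi * k / 1800 for k in range(1800)]
stats = [projection_stats(a) for a in angles]

best_by_variance = max(range(len(angles)), key=lambda i: stats[i][0])
best_by_distance = min(range(len(angles)), key=lambda i: stats[i][1])

print(f"max-variance direction: {math.degrees(angles[best_by_variance]):.1f} degrees")
print(f"min-distance direction: {math.degrees(angles[best_by_distance]):.1f} degrees")
```

For every candidate direction, the projected variance and the mean squared distance add up to the same total (the Pythagorean theorem applied point by point), so the direction that minimizes one necessarily maximizes the other.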
